Preamble

I’m a big fan of Michael Pershan’s project Math Mistakes. If you’ve never checked it out, it’s worth exploring. And while I’m meddling with your life, here’s a tip for your entire department: Start each meeting by spending five minutes exploring one of the mistakes posted on Michael’s site. On a rotating basis, have one member of the department share a “provocative” math mistake from the blog (or maybe even one from his or her own classroom). And once duly provoked… Cue the discussion!

Amble

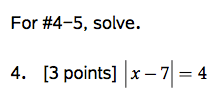

I included the following uninspiring question on a recent assessment:

The first two assessments I graded included the following responses:

Student 1

Student 2 (My apologies for the retype. I added some feedback before snapping a photo.)

Comment Fodder

So here’s my question (er, set of questions) for you:

- Are these mistakes equally egregious?

- What misconceptions are contained in the first mistake? How would you address them?

- What misconceptions are contained in the second mistake? How would you address them?

- Would you grant any credit for either response, and why?

I think I had a breakthrough today.

I’ve been meaning to blog about the “assessment workflow” in my classroom, but I’ve been putting it off because (a) time is limited, especially at the end of the school year, and (b) I wanted to be mostly satisfied with my workflow before I shared anything (and I’m not there yet).

I’ll write up the full details of how assessment happens in my classroom (it’s been a major work-in-progress this year), but for now I want to share a tiny bit of background and then cut to today’s breakthrough.

The Background

Last Sunday I aired some of my thoughts and questions on this topic to @Mythagon. A few other thoughtful folks dropped by to share their own ideas and pose a few new questions for me to chew on. It left me with a clear sense (as have other conversations) that my assessment routine fails students in the category of self-feedback. I’ve been trying to foster more (and better) student reflection in our assessment routine for several months now, and those efforts are the reason I’ve pasted this quick reflection form…

…at the bottom of every new assessment I write. However, I was looking for a way to incorporate something that would require students to be more thoughtful (just shading in a couple of boxes doesn’t necessarily demand any careful consideration) and at the same time foster a growth mindset among my students.

The Breakthrough

At the end of today’s assessment (after grading them; more on that in the next post), just before collecting everything, I gave students the following directions:

Two minutes later, I collected the papers and we moved on to something else. Later in the day I went through the papers to confirm the results and scores, to get a sense of common mistakes (again, more on this workflow later), and (this part was new today!) to read the SP and STI comments.

Growth Mindset, Plans for the Future

It’s early, but I’m sensing that this could be one of the most important features of my classroom in terms of developing a growth mindset among my students. I love the blend of looking back to celebrate something and looking forward at something (and how) to improve.

I’m wondering now about the best way to incorporate this SP/STI reflection into the “aftermath” of all my assessments. The comments (see below for some samples) were physically all over the place, with some easier to read than others. It might be worth the time (and “lost” space on the page) to add a little box near the top of the assessment with room carved out for the SP and STI comments. I’ll tinker with the layout and post an update if I come up with anything promising.

Student Comments, Round 1

Here are the SP/STI reflections from the first eight papers in the stack today. Some comments are decidedly un-profound, but others are exactly what I was hoping for right out of the gate. I’m hopeful that my classroom will become a more thoughtful and reflective place through this routine. We’ll see how it goes next time.

Update from the Interwebz

@mjfenton Trying the workflow today. Will be grading today. Instead of STI and SP, I used "Praise" & "Polish" (something we used earlier)

— Jedidiah Butler (@MathButler) May 9, 2014

I occasionally find myself wanting to read through one or more of those posts again. And sometimes I want to share them with a colleague or a student. Since I fully expect my future self will be even more lazy than my current self, I’ll drop some links to a few of my favorites below.

Enjoy!

- A Question: Why Don’t We Brag About Assessments? (March 11, 2013)

- Another Question Regarding Assessments (March 16, 2013)

- Some Reflections: How Assessment Impacts Curriculum (March 17, 2013)

- Creating Assessments: Three Types of Standards (March 23, 2013)

- Assessments: Synthesis Skills (March 28, 2013)

- Assessments: The Collateral Damage of SBG (April 27, 2013)

- SBG: My Standards, Assessments, and Thoughts on Grading (July 18, 2013)

P.S. If you’re just going to read three of them, make it #1, #4, and #5. Though by that point you’ll probably be hungry for more and you’ll wisely dive into #6.

Previously

If you’re just tuning in, check out the first post in the series. Or Topic 5, if you fancy. (Topic 6 doesn’t exist. It’s a long story.)

Algebra 1 • Topic 7

Assessment, Before

There’s a point in the school year (toward the end of the first semester, usually) when my brain and my body get together to discuss whether there’s ...


Warning: count(): Parameter must be an array or an object that implements Countable in /home3/reasonan/public_html/wp-includes/media.php on line 1206

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 2432

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

Deprecated: Function get_magic_quotes_gpc() is deprecated in /home3/reasonan/public_html/wp-includes/formatting.php on line 4371

If you’re just tuning in, check out the first post in the series. Or Topic 5, if you fancy. (Topic 6 doesn’t exist. It’s a long story.)

Algebra 1 • Topic 7 Assessment, Before

There’s a point in the school year—toward the end of the first semester, usually—when my brain and my body get together to discuss whether there’s enough left in the tank to write something decent (be it a lesson, assignment, assessment, or whatever). Apparently, late in November 2011, the exchange must have been something like this:

Brain: “Okay, body, whaddya say? Let’s write a quality assessment for Topic 7, shall we?”

Body: “Must… sleep… so… tired…”

Brain: “What’s that? You think all we can manage is a pile of whatsit?”

Body: “Erghhhh… Where are we?”

Brain: “Okay, then, that’s the plan! Mediocrity, coming right up!”

At any rate, the result of my/our/their efforts was nothing to write home about (except maybe to lodge a complaint). I hereby present to you, two questions worth their weight in zero g:

The worst part of it? I based these questions off two I found on the CST, a multiple-choice test the quality and usefulness of which I regularly sneer at. A strange thing it is to despise one’s assessment muse.

Now then… On to happier times!

Algebra 1 • Topic 7 Assessment, After

The first thing that had to die: The multiple-choice-ness of the problems. I’m not opposed to all multiple-choice problems in the world, just most. There are some decent questions here and there. In fact, quite a number of the ones I see in preparing students for the AP Calculus exam strike me as worth their weight in… I don’t know, maybe salt. (Modern-day market value, of course.)

But multiple-choice on a graphing linear equations assessment? Not a good fit, in my estimation. For starters, students with no idea of what they’re doing could luck their way into a perfect assessment score, especially when there are only two questions. Next, the format invites students to select an answer without showing much of their thinking. And beyond that, I left myself no room for questions that demand any measure of critical thought. (More on that in a moment.)

With those concerns at least partially in mind, I wrote a new assessment with four questions:

Here’s what I like: Goodbye multiple-choice format, hello (potential for) students showing a record of their thinking.

The questions aren’t amazing, and they’re quite limited in scope as they’re all really just begging for an equation in slope-intercept form (my beloved point-slope form comes up in Topic 8). However, I think they’re a dramatic improvement over the original.

Here’s what I still can’t stand: The assessment is still overwhelmingly focused on procedural understanding.

I suppose this might not change until I revisit/rewrite my skills and concepts list for Algebra 1 to include a richer approach to graphing lines, but it’s still disappointing to look at a mid-November assessment and see a total lack of “explain-your-reasoning-this” or “explain-your-reasoning-that.”

Wrap Up

A quick word about #3 before moving on… I chose to display the ordered pairs so that students wouldn’t struggle with miscounting too-little-toner tick marks. When Desmos adds a “grid density” feature for improved (read: bolder) printing, I’ll consider removing those labels. In the meantime, they’re staying.

More than any assessment in this series so far, I’m super-excited to hear suggestions on how to address the weaknesses of the updated assessment. Have an idea? Please share!

Feb 8, 2014 Update

Great idea from @BridgetDunbar:

@mjfenton rough draft-what abt some sort of matching like this: w/o coordinates/labeled axes-then defend answers pic.twitter.com/GeQsk9TZwb

— Bridget Dunbar (@BridgetDunbar) February 8, 2014

It all started with this post, and the most recent assessment adventure is here.

Algebra 1 • Topic 5 Assessment, Before

When I first made the shift to standards-based grading, I threw together a list of skills as best I could. When I considered equations involving absolute value, I figured, “What else would I want them to be able to do beyond solving a simple equation and solving a more advanced equation?” Fool. Anyway, here’s the pair of questions on the original Topic 5 assessment:

I was actually half-proud of the sequence of lessons I taught leading up to that original assessment. We built (or attempted to build) an understanding of equations involving absolute value by launching from a verbal approach with heaps of number line action, to a graphical approach with fantastic lines of intersection (and this was pre-Desmos!), before finally exploring an algebraic approach (the one and only representation I remember seeing as a student).

At any rate, a mediocre-to-decent set of lessons followed by a decidedly weak assessment left much to be desired.

Algebra 1 • Topic 5 Assessment, After

One issue I found in the original assessment that I tried to address in the update is that I had very little sense of who was struggling with the algebra and who was struggling numerically with the concept of absolute value. In other words, pick a kid who failed the assessment. Was his weakness only in solving equations, or was he unable to even evaluate expressions involving absolute value?

With that question in mind, I added three questions to the front end of the assessment:

#1-2 are nothing special, but they did give me a better sense of how deep a particular student’s struggles went. And #3 is standard assessment rewrite fodder for me, as you’ve no doubt seen in previous one-minute makeovers. Nothing like a little “explain the error” and “redo it correctly” to see what’s going on inside a student’s head.

The last two questions on the updated assessment are literally cut-and-pasted from the original assessment:

I decided the main point of this assessment was still, “Can you solve an equation involving absolute value or not?” (Is that a worthwhile goal? I’d love to hear your thoughts in the comments.)

Wrap Up

I’m still largely dissatisfied with this assessment, though I don’t really know what to do with it. I feel like our in-class approach to this topic—warts and all—was richer than the assessment would suggest. In the next one-minute makeover I’ll explore some options for incorporating questions that dig into students’ understanding of this topic from a verbal and a graphical approach. And while I’m at it, the quick nod at a numerical approach could be strengthened, possibly by shifting evaluation from out-of-context “Hey, what’s the value of this?” questions to determining whether particular x-values are solutions of a given equation (via substitution).
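To make that last idea a bit more concrete, here is a rough sketch of what a substitution-style check might look like. The equation and the candidate x-values are made up for illustration; they are not taken from the actual assessment.

```latex
\begin{align*}
\text{Equation:}\quad & |x - 3| = 5 \\
x = 8:\quad  & |8 - 3| = |5| = 5   && \text{(a solution)} \\
x = -2:\quad & |-2 - 3| = |-5| = 5 && \text{(a solution)} \\
x = 2:\quad  & |2 - 3| = |-1| = 1 \neq 5 && \text{(not a solution)}
\end{align*}
```

A question built this way would ask students to decide which of the given x-values are solutions and to show the substitution that justifies each decision.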

Interested in the lessons and assignments that preceded this assessment? The brave shall enter through this door.

Anyway, more reflecting and tinkering is on the way. In the meantime, drop your own thoughts and questions in the comments below.

If you’re just tuning in, consider checking out the first post in the series, or the most recent post.

Algebra 1 • Topic 4 Assessment, Before

We’re drifting into a little section of my Algebra 1 curriculum that I’m at least a little bit ashamed of. I have no one to blame but myself since I put the textbook on the shelf and created lessons, practice, and assessments from scratch. Big plans for improvement in the months ahead, but for now, warts and all…

Here are the two-and-only questions from Form A of my old Topic 4 assessment:

Oh, the shame! I’ll talk more about the gap between this assessment and all-that-is-decent-in-this-world in a moment. For now I’ll just remark that what these questions actually demand of students is so far below what I originally intended that they are essentially useless as an assessment tool in Algebra 1.

Algebra 1 • Topic 4 Assessment, After

The first major flaw in the original assessment is that the questions appear out of nowhere and drift away from our attention just as suddenly. So I replaced these two unrelated questions with a sequence of five questions related to a single scenario. Here are the questions from the new Form A:

My initial intention was for students to use expressions and/or equations to answer the questions on the assessment. On the original version, almost none of my students approached the problems algebraically. Many were able to answer the questions (and many were not), but nearly everyone who answered correctly did so with nothing more than some numerical tinkering.

While I’m not opposed to numerical tinkering (quite the contrary; I think it’s a fantastic practice for students), in this class and on this assessment I was hoping to see whether they could write an expression to model a situation and use the expression to answer another question or two in an efficient manner.

With the original assessment, this was a lost cause. With the updated version (particularly #3-5) I was able to measure at least part of what I set out to measure.

Oh, But It’s Still So… (aka “Wrap Up”)

While my current assessment is an improvement over the first version, it still strikes me as terribly inadequate. Here we are, at the end of a unit on linear modeling, and there’s a massive void when it comes to two hugely important things: (1) At no point is any connection made between the verbal/numerical/algebraic representations and a graphical one (and it would be so easy to fix this!), and (2) The scenario is decidedly boring and contrived.

I have ideas for how to address #1, but am at a loss for how to remedy #2 in the space of a single-page assessment. More to think about for the next round of revisions.

One additional minor/medium flaw I see in the updated version is this: At no point do I ask students to explain their reasoning, justify their thinking, etc. (And word on the street is that those are cool things to do.)

Until next time…

]]>

Previously…

The first post in the series is here. The previous post (Topic 2, Part 2) is here.

Algebra 1 • Topic 3 Assessment, Before

When I first drew up this assessment, my goals were to evaluate students’ ability to simplify linear expressions and solve linear equations. Here’s what the two questions of Form A looked like: I had the ... ]]>
